The Network Capture Playbook Part 2 – Speed, Duplex and Drops

In part one of the playbook series we took a look at general Ethernet setups and capture situations, so in this post (as in all others following this one) I’ll assume you’re familiar with the topics previously discussed. This time, let’s check out how speed and duplex can become quite important, and what “drops” are all about.

Speed & Duplex

There are two duplex modes that we’re used to looking at in Ethernet networks:

- Half Duplex, also known as “HDX”. Only one sender can talk at any given time without problems; if two talk at once, the result is a so-called “collision”.

- Full Duplex, “FDX”. Bidirectional communication is possible, so sending and receiving at the same time is not a problem.

So what happens if one device is in FDX mode and the other is only using HDX? Well, the device in FDX mode thinks it can talk whenever it wants to, not realizing it’ll cause collisions for the other device when that one is sending something at the same time. This is called a duplex mismatch. The result is usually a throughput ratio of “augh!!!” (or, to be more specific, single-digit kbyte/s instead of Mbyte/s on 100Mbit links).

Fun fact 1: Auto Negotiation

Sometimes people think that 10/100 Mbps Auto Negotiation on one side is smart enough to adopt the setting of the other side if that side is configured statically. The usual assumption is “If I set one side to fixed, the other will adjust to the same setting automatically.” So think about this example setup: one side (usually a switch) is configured for 100Mbps full duplex and the other (usually a client PC) is configured for auto negotiation. What is the result? A duplex mismatch.

The reason is that the auto negotiation side (in this case the PC) sends its capabilities, like “I can do 10Mbps/half. I can do 10Mbps/full. I can do 100Mbps/half. I can do 100Mbps/full.” What does the other side send? Well, it’s configured for static 100Mbps/full, so it doesn’t feel the need to send anything at all. This leads to the “auto” side falling back to half duplex, because it has to assume the worst. At least the speed is still detected correctly as 100Mbps (via so-called parallel detection), otherwise we’d have a speed mismatch, too, leading to total link failure.

This is why the rule usually is: both sides on auto, or both static. At least it was until the IEEE Gigabit specifications came out (IEEE 802.3z), which contained a minor but important change: they force devices on “fixed” settings to send their settings to the other side, too. This is the main reason why we see far fewer duplex mismatches these days as we move to Gigabit and faster links.
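To make the negotiation behavior above a bit more concrete, here is a minimal Python sketch of how an auto-negotiating 10/100 port might settle on a mode. It is a simplification under stated assumptions: only four modes are modeled, the names (`resolve`, `PRIORITY`, `ALL_MODES`) are hypothetical, and real PHYs do all of this in hardware. Passing `None` as the partner models a fixed 10/100 port that sends no negotiation information at all; the second call models a fixed port that still advertises its single setting.

```python
from typing import Optional, Set, Tuple

Mode = Tuple[int, str]  # (speed in Mbps, duplex)

# Simplified 10/100 priority order, best first. The real priority
# resolution in IEEE 802.3 also ranks 100BASE-T4/T2, left out here.
PRIORITY = [(100, "full"), (100, "half"), (10, "full"), (10, "half")]
ALL_MODES: Set[Mode] = set(PRIORITY)


def resolve(own: Set[Mode], partner: Optional[Set[Mode]], sensed_speed: int) -> Mode:
    """Return the mode an auto-negotiating port settles on.

    partner is None when the other side sends no negotiation pulses,
    which is what a 10/100 port fixed to one setting does.
    """
    if partner is None:
        # Parallel detection: the speed can be sensed from the line signal,
        # but the duplex cannot, so the port assumes the worst.
        return (sensed_speed, "half")
    # Both sides advertised: pick the best mode they have in common.
    for mode in PRIORITY:
        if mode in own and mode in partner:
            return mode
    raise ValueError("no common mode")


switch = (100, "full")  # statically configured switch port
pc = resolve(ALL_MODES, None, sensed_speed=100)
print("silent fixed switch:", switch, "PC:", pc)

# A fixed port that still advertises its one mode:
pc = resolve(ALL_MODES, {switch}, sensed_speed=100)
print("advertising fixed switch:", switch, "PC:", pc)
```

The first call ends in 100/half against a 100/full switch, which is exactly the mismatch described above; the second one ends in matching settings.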

Fun fact 2: Gigabit half duplex

Yes, there is/was a Gigabit half duplex standard. Rumor has it that even though the engineers knew half duplex was history, they needed to define a half duplex mode for the specifications to be able to stay within the 802.3 working group. That’s the “CSMA/CD” group, and the “CD” means “Collision Detection” – which requires half duplex, otherwise there’s no collision 😉

What? He’s still talking about duplex?

I can imagine some of my readers staring at the full/half duplex topic saying “dude, that was something you needed to think about 10 to 15 years ago, but now?! It’s all full duplex!”. First of all, this series was written to pick up beginners, too. And second, let’s ask a simple but very important question for the advanced reader:

Can you capture a fully saturated 1Gbps full duplex link with a single port 1Gbps full duplex network card?

And the answer is… no. No, you can’t.

And since I am certain that a lot of people will now frown and scratch their heads in disbelief, let’s take a closer look at the matter, because this is important. The key word in my question above is “full” duplex – as we all know by now, this means that a device can send and receive at the same time.

So what does it mean if we’re talking about a 1Gbps full duplex link? It means: 1 Gbit per second send rate and 1 Gbit per second receive rate (not 500Mbit/s per direction, as students in my Wireshark classes sometimes guessed incorrectly). So a 1Gbps full duplex link in fact has a total bandwidth of 2 Gbps (yes, only if it is fully saturated, of course). The same applies to 10Gbps FDX links – they’re in fact 20Gbps. 25Gbps means 50Gbps, 40Gbps means 80Gbps, and 100Gbps is 200Gbps, if they’re full duplex.
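The arithmetic is simple, but as a quick sketch (the helper name is hypothetical): a saturated FDX link carries its nominal rate in each direction at the same time, so the aggregate is twice the link speed.

```python
def fdx_aggregate_gbps(link_speed_gbps: float) -> float:
    """Aggregate traffic on a saturated full duplex link:
    the nominal rate in each direction, at the same time."""
    return 2 * link_speed_gbps


for speed in (1, 10, 25, 40, 100):
    print(f"{speed:>3} Gbps FDX link -> up to {fdx_aggregate_gbps(speed):g} Gbps in total")
```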

Alright, so why can’t we capture that link with a single port network card? The card is full duplex, too, isn’t it?

Yes. But a capture card only receives packets; it doesn’t send any at all (at least it should not, or, from my point of view, must not). So the “send” bandwidth of the capture card becomes irrelevant, and you’re stuck with 1Gbps receive bandwidth. So no, a 1Gbps capture card isn’t good enough to capture a 1Gbps FDX link if there is heavy incoming and outgoing traffic at the same time. It’s simply a 2Gbps data rate vs. a 1Gbps receive rate. This is something we will have to deal with quite a lot later, but if you’re thinking “uh-oh…” right now, you’re on the right track already.
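A rough way to sanity-check a planned capture setup is to add up both directions of the monitored link and compare the sum with the capture port’s receive rate. The sketch below assumes sustained rates and ignores buffering, which can only absorb short bursts; the function name and the example numbers are hypothetical.

```python
def capture_shortfall_mbps(tx_mbps: float, rx_mbps: float, capture_rx_mbps: float) -> float:
    """Sustained traffic (Mbit/s) a single capture port cannot take in.

    Both directions of the monitored full duplex link end up on the
    capture port's receive side, so they add up. Buffering is ignored.
    """
    return max(0.0, (tx_mbps + rx_mbps) - capture_rx_mbps)


# Hypothetical example: 1 Gbps FDX link at 700 Mbit/s in each direction,
# captured with a single-port 1 Gbps card.
tx, rx = 700, 700
short = capture_shortfall_mbps(tx, rx, 1000)
print(f"{short:g} Mbit/s over capacity, about {short / (tx + rx):.0%} of the traffic")
```

Note that the link in this example is only 70% utilized per direction, yet the capture port still falls short.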

Capturing Preamble and Auto Negotiation info

It’s almost impossible to capture the preamble and start frame delimiter, which are sent just before each Ethernet frame is put on the network. You might be able to see them using an oscilloscope in slow networks (e.g. 10Mbit/s), or if you happen to catch a collision happening in a packet (see the previous part of this series).

The reason for that is that your network card only transfers frames to your computer or any kind of device – it doesn’t forward the “management” stuff happening on the physical wire, because it’s not relevant except for the card. So if you want to see any of those bits you need at least a special (read: professional, much more expensive) network capture card – normal consumer network cards won’t let you capture any of it.
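To illustrate which bytes never reach the capture software, here is a sketch of the on-wire layout: 7 preamble bytes of 0x55, the start frame delimiter 0xD5, then the frame itself. The function is hypothetical and only models the stripping a NIC does in hardware; many NICs also remove the 4-byte frame check sequence (FCS), which this sketch leaves alone.

```python
PREAMBLE = bytes([0x55] * 7)  # 7 bytes of alternating 1/0 bits
SFD = bytes([0xD5])           # start frame delimiter


def frame_from_wire(wire: bytes) -> bytes:
    """Return what a typical NIC hands to the host: the frame without
    preamble and SFD (the FCS is left in place in this sketch)."""
    header = PREAMBLE + SFD
    if not wire.startswith(header):
        raise ValueError("no preamble/SFD found")
    return wire[len(header):]


# Ethernet header only (destination, source, EtherType), for illustration;
# this is not a valid minimum-size frame.
frame = bytes.fromhex("ffffffffffff" "001122334455" "0806")
on_wire = PREAMBLE + SFD + frame
print(len(on_wire), "bytes on the wire,", len(frame_from_wire(on_wire)), "bytes seen by the host")
```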

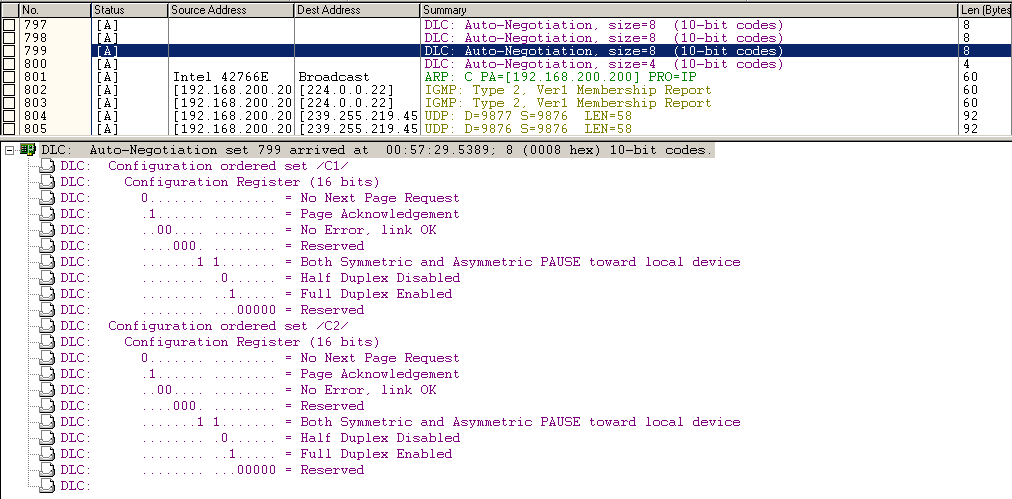

E.g. with a professional capture card and a TAP you might be able to at least catch Auto Negotiation pulses like the following ones (there are many more preceding them, but as you can see this is just before the computer has “link up”), captured with my Network General S6040 sniffer appliance in combination with a fiber optic full duplex TAP:

“Drops”

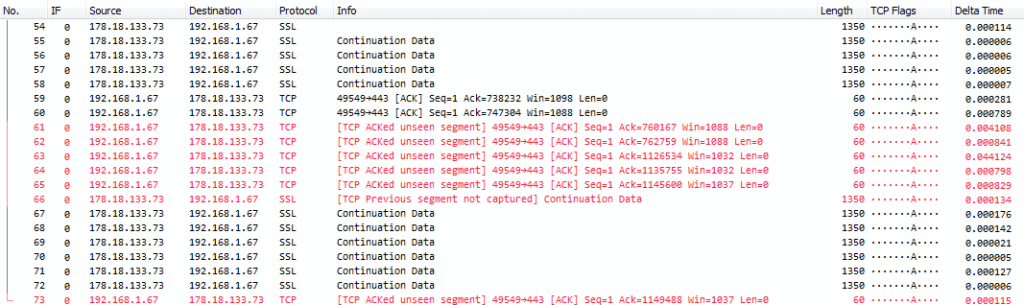

A “dropped” packet is a packet that existed on the network and should have been captured – but wasn’t. The difference to a “lost” packet is that the “lost” packet got destroyed somewhere on the network (read: is really missing on the wire, with “wire” meaning the actual connection link that is used by network devices to communicate with each other) and – in case of TCP – was retransmitted. If a packet is missing in the trace but was in fact present on the wire, it’s a “Drop”. This is what a typical “drop” situation looks like:

The “TCP ACKed unseen segment” message created by the Wireshark TCP analysis module is almost always a hint that you had packet drops during the capture: Wireshark has an acknowledgment for a data packet, but it hasn’t seen the data packet itself anywhere. So something was acknowledged and thus must have been on the wire, but Wireshark didn’t “see” that data, which means it wasn’t captured. There are only two major reasons why this could have happened:

- Packet Drops, meaning that the capture performance was not high enough to grab all packets

- Asymmetric routing – data packets are taking a different path to the destination than the acknowledge packets, and that different path wasn’t captured on. In this scenario, the missing packets aren’t called drops, and it’s easy to spot, because you’re looking at one-sided communication flows where you don’t have the other side in the capture at all.
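The logic behind “ACKed unseen segment” can be sketched in a few lines: track how far the captured data is contiguous, and flag any ACK that goes beyond that point. This is a simplified model, not Wireshark’s actual implementation; it assumes one direction of one connection, ignores timing, sequence number wraparound and SACK, and the function name is hypothetical.

```python
def acked_unseen(start_seq, segments, acks):
    """Return the ACK numbers that acknowledge bytes no captured segment carried.

    start_seq: first data sequence number of this direction (ISN + 1)
    segments:  (seq, length) of every captured data segment
    acks:      ACK numbers sent by the other side
    """
    # Walk the captured segments in sequence order and find how far the
    # data is contiguous, starting at start_seq.
    contiguous_end = start_seq
    for seq, length in sorted(segments):
        if seq > contiguous_end:
            break  # first hole in the captured data
        contiguous_end = max(contiguous_end, seq + length)
    # Any ACK beyond that point covers data the capture never saw.
    return [ack for ack in acks if ack > contiguous_end]


# Hypothetical trace: the segment carrying bytes 1200-1299 is missing.
captured = [(1000, 100), (1100, 100), (1300, 100)]
print(acked_unseen(1000, captured, [1100, 1200, 1300, 1400]))  # -> [1300, 1400]
```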

More than 95% of the time, packets missing from the capture are caused by reason #1, though: the capture performance wasn’t good enough. By the way, another indicator of drops is a “TCP Previous segment not captured” message that isn’t followed by a retransmission. Think about it 😉
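One way to think it through, as a sketch: a gap in the sequence numbers that is later filled by a retransmission points to real loss upstream of the capture point, while a gap that is never filled in the trace points to a probable drop. The function below is hypothetical and assumes a retransmission starts exactly at the first missing byte; real traces are messier (split retransmissions, wraparound, asymmetric paths).

```python
def classify_gaps(segments):
    """segments: (seq, length) tuples for one direction, in capture order.

    Returns (gap_start, gap_end, verdict) for every sequence gap."""
    verdicts = []
    next_seq = None  # next sequence number we expect to see
    for i, (seq, length) in enumerate(segments):
        if next_seq is not None and seq > next_seq:
            # "Previous segment not captured": bytes next_seq..seq-1 are missing.
            gap_start = next_seq
            # Does the missing data show up later in the trace?
            retransmitted = any(s == gap_start for s, _ in segments[i + 1:])
            verdicts.append((gap_start, seq, "loss" if retransmitted else "probable drop"))
        if next_seq is None or seq + length > next_seq:
            next_seq = seq + length
    return verdicts


trace = [(1000, 100), (1200, 100), (1100, 100), (1300, 100), (1500, 100)]
for gap in classify_gaps(trace):
    print(gap)
```

In this made-up trace the first gap is filled by a later retransmission (loss), while bytes 1400-1499 never show up at all (probable drop).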

Causes of packet drops

There are multiple possible causes for drops, and all of them can be classified as “a device wasn’t fast enough to handle the number of packets”. Drops can be caused by a switch, a TAP, a network card, a disk drive or even the CPU and RAM of a PC (e.g. when the capture software isn’t doing its task efficiently enough). Or, to sum it all up: any device or mechanism standing between the packet on the wire and the disk it’s finally written to may cause drops. Some of these are more common than others (again, dear TAP vendors, watch your blood pressure – we’ll look at TAPs later, clarifying things about drops 😉)
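Every stage in that chain can be thought of in the same way: packets arrive, a limited buffer holds what the stage can’t process right away, and whatever doesn’t fit is dropped. The toy model below is a sketch under simplifying assumptions (hypothetical names, one “tick” as the time unit, arrivals handled before processing within a tick). It shows that a stage can drop packets during a burst even when its average load is below its capacity, and that a larger buffer can absorb the same burst.

```python
def simulate(arrivals, service_per_tick, buffer_size):
    """Count packets dropped by one stage in the capture chain.

    arrivals:         packets arriving in each time tick
    service_per_tick: packets the stage can process per tick
    buffer_size:      packets it can queue while busy
    """
    queued = 0
    dropped = 0
    for n in arrivals:
        queued += n
        if queued > buffer_size:
            # Buffer overflow: everything that doesn't fit is a drop.
            dropped += queued - buffer_size
            queued = buffer_size
        # The stage works off as much of the queue as it can this tick.
        queued -= min(queued, service_per_tick)
    return dropped


burst = [50, 50, 50, 0, 0, 0]  # average 25 packets/tick, below the capacity of 30
print("small buffer:", simulate(burst, service_per_tick=30, buffer_size=40), "dropped")
print("large buffer:", simulate(burst, service_per_tick=30, buffer_size=100), "dropped")
```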

In general, drops are a problem in some capture situations but more or less irrelevant in others:

Critical drops

Drops are critical in any situation where you need to examine the packets in full detail and can’t afford to lose any of them. This is often the case in network forensic examinations where content reconstruction is required. For example, if you’re missing one (or more) packets that are part of a malicious file being transferred, you can’t reconstruct the file anymore, so a malware analyst can’t examine it via reverse engineering. In other situations you might be examining the cause of lost packets, and drops could lead to wrong conclusions simply because you thought you had packet loss when it was just a drop.

Slightly problematic drops

Drops may be annoying but not critical if you can work around them in a network troubleshooting situation. Usually this requires above-average analysis skills, because the analyst needs enough experience to find the root cause when drops are involved; drops can sidetrack most less experienced analysts. An example would be a TCP connection being examined in a “bad performance” scenario, where the analyst can live with rare, occasional drops because the acknowledgment packets make it clear that the missing packets were simply dropped by the capture, not lost. In a “packet loss” scenario, however, where you have to find a faulty network device that causes packet loss, you cannot afford drops, because they mess with the analysis results: you may not be able to tell if a packet was really lost, or if the capture just didn’t perform well enough.

Irrelevant drops

Drops become irrelevant in scenarios where the analyst isn’t interested in looking at each and every packet, e.g. in a baselining situation. If you need to create a statistic about something happening on the network (e.g. protocol distribution: “how many of all packets are HTTP?”), you can easily live with dropped packets. They don’t skew the results that much (well, unless you have tons of them, of course) 🙂
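A quick sketch of why baselining statistics survive drops: if drops are spread evenly across the traffic, the proportions barely move. The trace and the drop pattern below are made up for illustration; real drops tend to come in bursts, and if they hit one kind of traffic harder than others, the statistic will be skewed accordingly.

```python
from collections import Counter


def protocol_shares(packets):
    """Fraction of packets per protocol label."""
    counts = Counter(packets)
    total = sum(counts.values())
    return {proto: n / total for proto, n in counts.items()}


# Hypothetical trace: 1000 packets, half of them HTTP.
trace = ["HTTP", "DNS", "TLS", "HTTP"] * 250
# Simulate a capture that dropped every 7th packet (about 14%).
captured = [p for i, p in enumerate(trace) if i % 7 != 6]

print(f"HTTP share on the wire:    {protocol_shares(trace)['HTTP']:.1%}")
print(f"HTTP share in the capture: {protocol_shares(captured)['HTTP']:.1%}")
```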

Final Words

Yes, I know, I said in the previous part that I’d look at network cards in this part as well, but I wanted to avoid a very long post, so I pushed that topic back. Otherwise I’d have had to keep things short, and I don’t want to do that. Network cards will get their own part instead.

What you should have learned this time is: drops may be a big or a small problem, and full duplex data rates can be an even bigger one if the link is fully utilized and you only have a single port capture card.

Other parts of this series

Part 1: Ethernet Basics

Part 3: Network Cards

Part 4: SPAN Port In-Depth

Part 5: TAP Basics

Part 6: Planning Network Captures

Hi Jasper,

I still see a lot of duplex mismatches with the devices I support.

From your piece I would conclude that NICs are being used that do not follow 802.3z.

So 10/100 Mb/s NICs fixed to a speed will not send their duplex settings, as they are not 1 Gb/s NICs?

Best regards,

Alcindo

Yes, 10/100Mbit cards do not send their settings if set to fixed, so the other side goes to 10/100 half duplex, assuming the worst. This may even apply to Gbit NICs that are set to 100Mbit fixed, but it may depend on the card.

Thanks, good to know. I will quote you on this 😉

And a lot of switches are not explicit about their negotiation setting if you allow only one speed/duplex setting. (You can still negotiate speed and duplex and tell the other party that it can choose between 100-fdx or nothing else.)

It was some time ago, but we had a lot of problems with network boot: Intel boards with Intel cards that wanted to negotiate, and a Cisco switch that was configured not to negotiate.

Hi Jasper

Great post, very helpful indeed and I like your style of writing (easy to follow and connect the dots)

Although I have been in IT for a long time, I only recently started getting into Wireshark, and right now I am looking at a TCP performance issue. So this particular post is extremely helpful (e.g. is it a drop or a loss?). For example, I am getting lots of dup ACKs (between 50 and 200) and lots of fast retransmissions (or what Wireshark thinks are retransmissions). So the insights you provide in this post (and your previous post) will certainly help 🙂

Ernie

If you get tons of duplicate ACKs and retransmissions, you always need to ask yourself if those are maybe caused by duplicate frames. See this blog post for details: https://blog.packet-foo.com/2015/03/tcp-analysis-and-the-five-tuple/ and get rid of duplicates first before confusing yourself. If you’re sure you don’t have duplicates, continue 😉

Hello Jasper

Thanks very much for the tip, I will take a look at the link you posted above. Interestingly, this is an MPIO situation with more than one path for the packets to take (although they should go via the preferred route).

Thanks again

Ernie